An introduction to my VR passion project of creating a Virtual Reality Recording Studio in Unity3D

Preface

Hi there!

Let me tell you about my journey into the world of virtual reality. Back in 2013, I was given an amazing opportunity to work on a virtual reality experience for an indoor attraction park. It was an exciting time, but there were a lot of challenges to overcome. The Oculus DK1 hadn’t been released yet, and room-scale VR hadn’t even been invented!

Despite these obstacles, I put in a lot of hard work and research, trying out different hardware and software solutions. We finally built a markerless motion-tracking system for up to four people, and combined a modified DK1 with a laptop in a backpack to create 6-DOF room-scale VR. It was a huge achievement, but unfortunately the project had to come to an end after two years, as the VR equipment and software just weren’t up to par for our client.

But the experience I gained from this project was invaluable. I learned about markerless motion capture, the Unity3D game engine, and the endless possibilities of virtual reality. It was only a matter of time before I started exploring opportunities to create my own VR project.

In 2015, I finally took the plunge and decided to create something that could spark creativity – a Virtual Reality Recording Studio! The first step was to research whether it was possible to create a working mixing desk in a game engine. After a successful test, I spent time improving my Unity, programming, and 3D modelling skills while working on other projects for various clients.

Now I was ready to take on this new VR project and continue to push the boundaries of what’s possible with this amazing technology.

Click to view: Virtual Reality Recording Studio Webstory

Join the discussion on my Discord server, where you can also download one of the first alpha versions to try on SteamVR:

https://discord.gg/zQ7zcprs4N

A development blog article about creating and building the application VR Audio Engineer, where people can learn and experience mixing in a virtual recording studio.

Unity3D development

Prototype

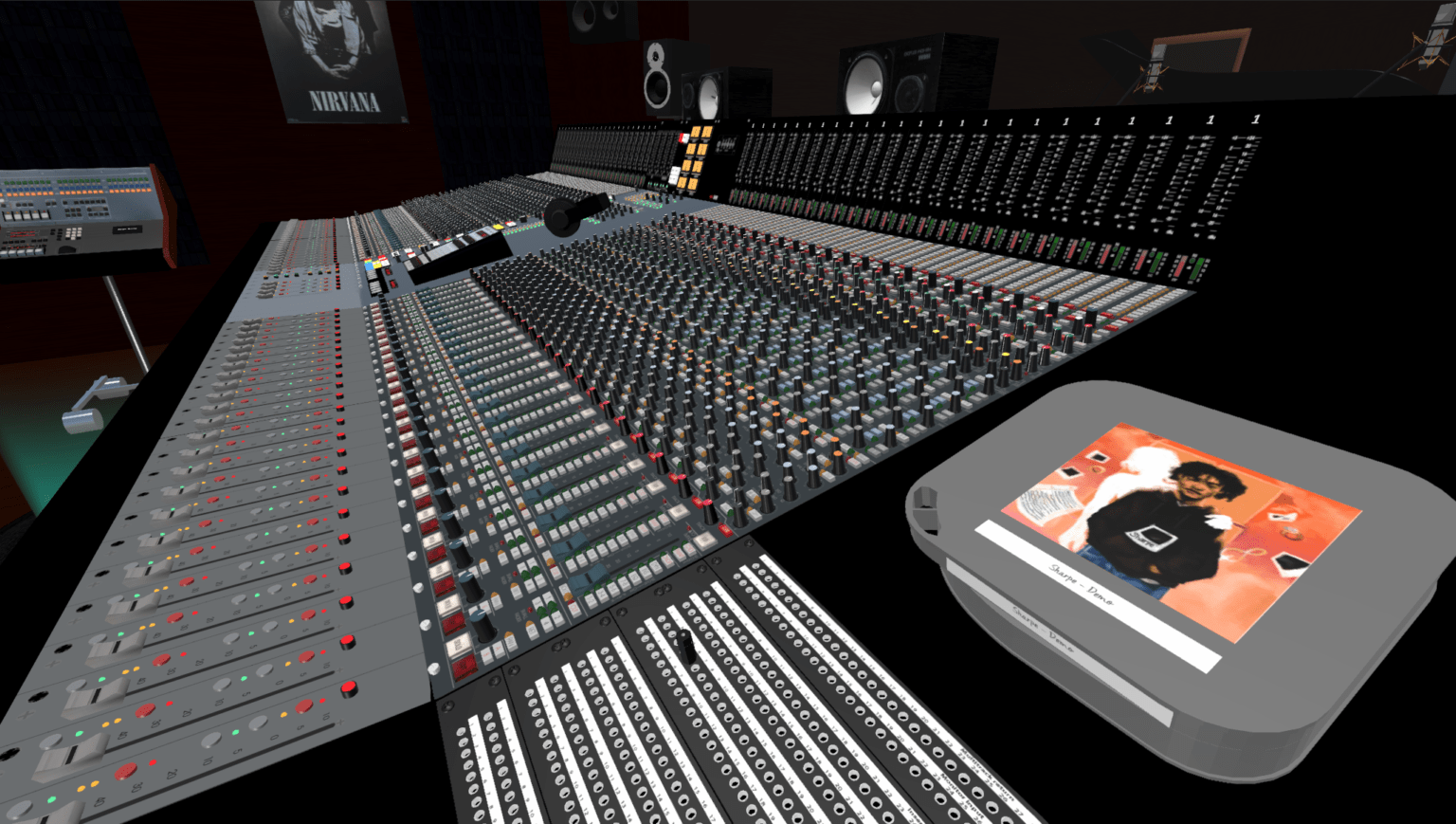

Fast forward to 2017: I decided to take my VR project to the next level and started working on a full-scale prototype in Unity3D/VR. But before I could begin, I had to decide what equipment to replicate in my virtual reality recording studio. I wanted the centrepiece to be the console, so I scoured the internet for inspiration. Initially, I had my heart set on building a Focusrite console after watching a documentary about these consoles, but I couldn’t find enough reference material to work with.

So, I turned my attention to another legendary console from another documentary (Sound City): the Neve console. However, the Neve consoles came in many variations, and I couldn’t find enough documentation online to build one.

But I did manage to find a PDF manual of the AMS Neve VR Legend, and that was it! It was already in the name: this particular console was destined to be the first VR console.

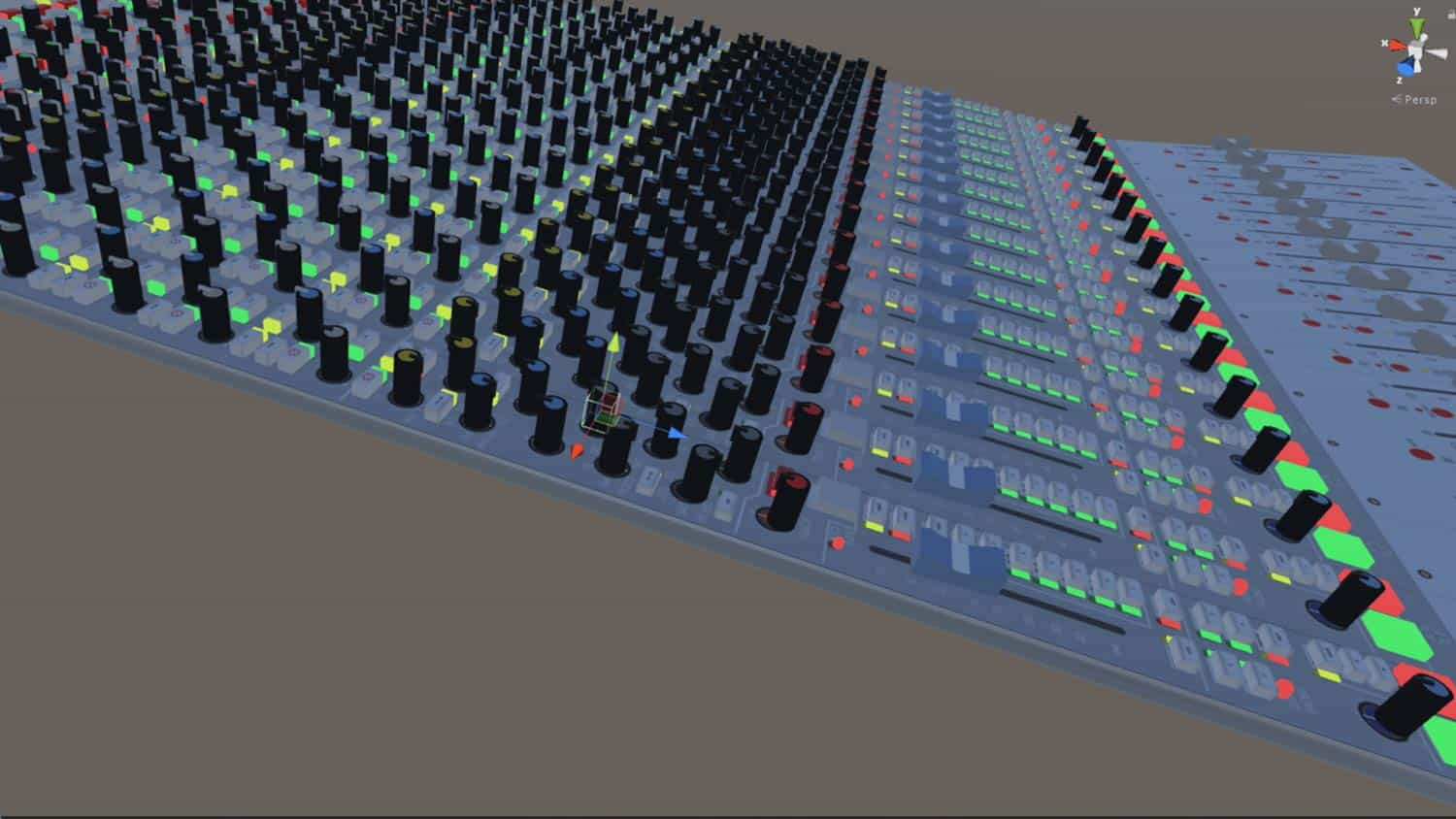

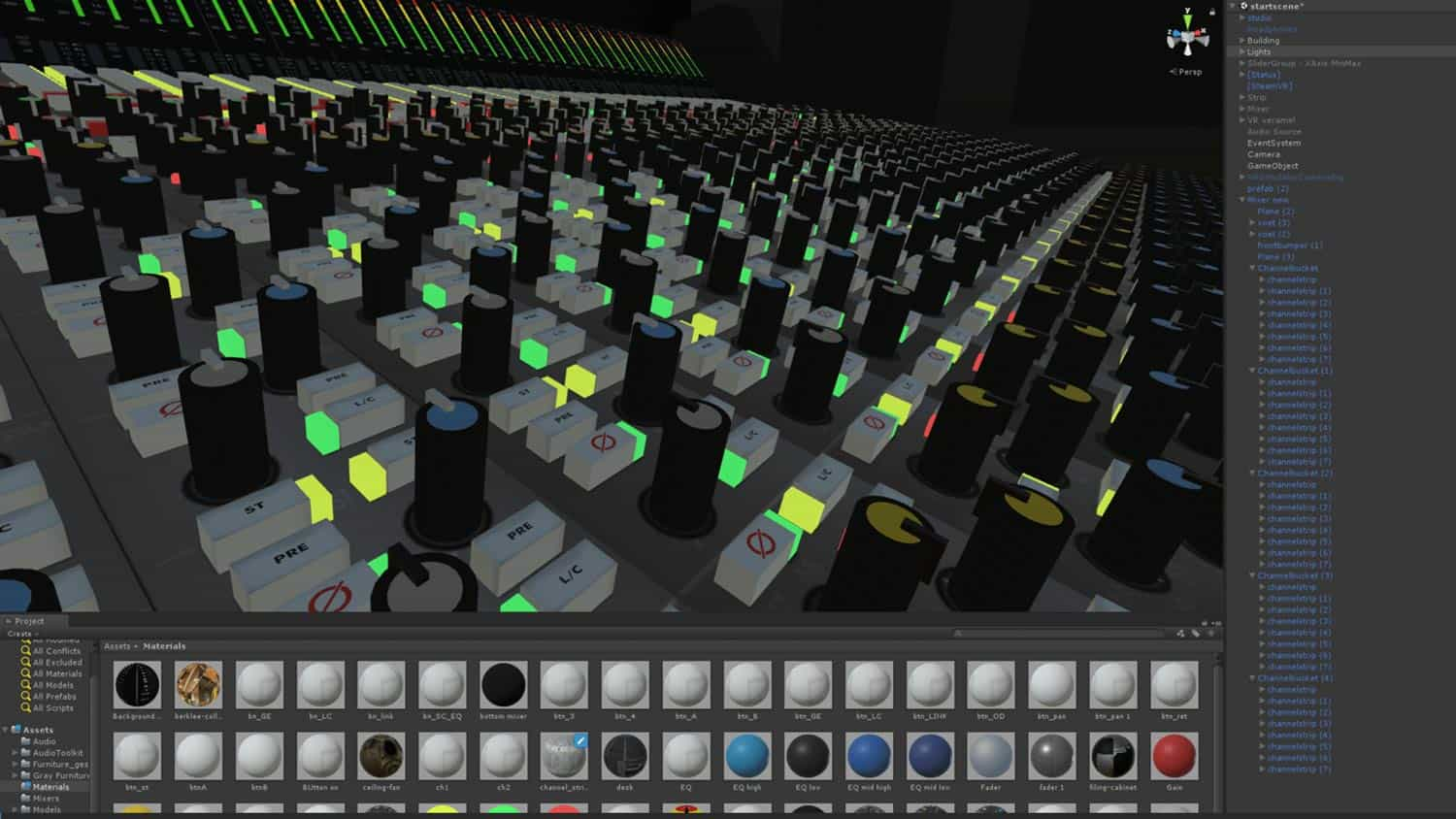

Building the console was the first step, so I modelled it in 3D from pictures and drawings and then programmed its behaviour using the manual. It was a lot of work, but after a while, I had the basics of the console working. The level, EQ, and monitoring were all functional, and I had a virtual 24-track Studer multitrack machine serving as a WAV/MP3 file player.

Of course, no recording studio is complete without a control room, so I quickly created a mock-up virtual control room to give a sense of space and scale when in VR. But then came the hard part. The following three challenges took the most time during development:

Challenges of Creating the Virtual Reality Recording Studio

The first challenge of creating the Virtual Reality Recording Studio was a tough one! I had to optimise the graphics and code for VR so that it could render all the buttons and elements at a minimum of 90 frames per second on two screens (one per eye). That’s a lot of work, especially with a console that has so many buttons and sliders. But after a lot of experimentation and tweaking, I was able to get it just right by optimising the 3D models, using instancing, and re-texturing objects. This is still being optimised in current versions.
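To put the 90 frames per second target in numbers, here is a small back-of-the-envelope sketch in C++. It is not code from the project: the `Control` struct, the mesh and material names, and the rule "one draw call per control without instancing, one per unique mesh and material pair with instancing" are simplifying assumptions made purely for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <set>
#include <string>
#include <utility>
#include <vector>

// Hypothetical description of one console control (names are illustrative).
struct Control {
    std::string mesh;      // e.g. "knob_small"
    std::string material;  // e.g. "grey_plastic"
};

// Time available to render one frame at the given refresh rate, in milliseconds.
double frameBudgetMs(double framesPerSecond) {
    assert(framesPerSecond > 0.0);
    return 1000.0 / framesPerSecond;
}

// Assumption: without instancing, every control costs one draw call.
std::size_t drawCallsNaive(const std::vector<Control>& controls) {
    return controls.size();
}

// Assumption: with instancing, controls that share both mesh and material
// are drawn together, so we count the unique (mesh, material) pairs.
std::size_t drawCallsInstanced(const std::vector<Control>& controls) {
    std::set<std::pair<std::string, std::string>> batches;
    for (const Control& c : controls) {
        batches.emplace(c.mesh, c.material);
    }
    return batches.size();
}
```

At 90 frames per second, `frameBudgetMs(90.0)` leaves roughly 11 ms for everything in a frame. Because a console repeats the same few knob and button shapes many times, grouping identical controls can cut the number of draw calls considerably.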

The second challenge was all about reducing the audio CPU time. In my initial test in 2015, the audio processing (DSP) used so much CPU time that there was no room left to expand the console. So, I decided to learn a bit of C++ and modify the audio plugins for Unity to do parts of the processing in more efficient code, which left enough CPU power for VR. It was a lot of hard work, but I was determined to make it happen.
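To give an idea of the kind of per-sample work that ends up in C++, here is a minimal sketch of a single parametric EQ band. It is not the project's plugin code: the class name `PeakingEq` and its parameters are made up for this example, the coefficients come from the widely used "Audio EQ Cookbook" peaking filter formulas, and the Unity plugin interface is deliberately left out.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// One band of a parametric EQ as a biquad filter (direct form I).
// Hypothetical class for illustration only.
class PeakingEq {
public:
    PeakingEq(double sampleRate, double centreHz, double q, double gainDb) {
        assert(sampleRate > 0.0 && centreHz > 0.0 &&
               centreHz < sampleRate / 2.0 && q > 0.0);
        const double kPi = 3.14159265358979323846;
        const double a = std::pow(10.0, gainDb / 40.0);
        const double w0 = 2.0 * kPi * centreHz / sampleRate;
        const double alpha = std::sin(w0) / (2.0 * q);
        const double a0 = 1.0 + alpha / a;
        // Normalise by a0 once so the inner loop needs no division.
        b0_ = (1.0 + alpha * a) / a0;
        b1_ = (-2.0 * std::cos(w0)) / a0;
        b2_ = (1.0 - alpha * a) / a0;
        a1_ = (-2.0 * std::cos(w0)) / a0;
        a2_ = (1.0 - alpha / a) / a0;
    }

    // Filters a mono buffer in place, keeping state between calls.
    void process(float* buffer, std::size_t numSamples) {
        for (std::size_t i = 0; i < numSamples; ++i) {
            const double x = buffer[i];
            const double y = b0_ * x + b1_ * x1_ + b2_ * x2_
                           - a1_ * y1_ - a2_ * y2_;
            x2_ = x1_;
            x1_ = x;
            y2_ = y1_;
            y1_ = y;
            buffer[i] = static_cast<float>(y);
        }
    }

private:
    double b0_, b1_, b2_, a1_, a2_;
    double x1_ = 0.0, x2_ = 0.0, y1_ = 0.0, y2_ = 0.0;
};
```

A console channel needs several of these bands plus level and routing, multiplied by the number of channels, which is why a tight inner loop without allocations or divisions matters.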

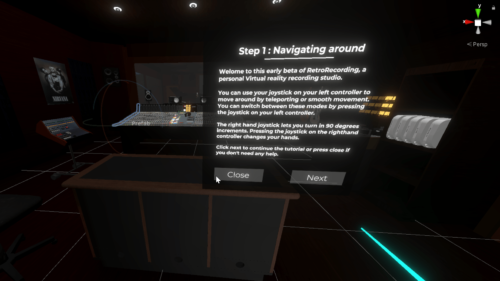

And finally, the third challenge was developing a natural VR interface to control the small buttons, sliders and pots with VR controllers. This was probably the most difficult challenge of all, and it went through many iterations. I eventually settled on using haptics and visual magnification in conjunction with natural gestures like turning, sliding and pressing. It’s a natural and intuitive way to control the console in VR, and I’m proud of how it turned out.
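As a rough illustration of the turning gesture, the sketch below maps a controller twist onto a pot. It is only one possible approach and not the implementation used in the application: the `Pot` struct, the 270-degree sweep, and the `precision` factor (a stand-in for making small knobs easier to adjust) are all assumptions.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical model of a rotary pot driven by twisting a VR controller.
struct Pot {
    double value = 0.5;           // normalised position, 0.0 to 1.0
    double sweepDegrees = 270.0;  // assumed travel of the knob
};

// Applies a controller twist (degrees turned since the last frame) to the pot.
// A precision above 1 means the hand must turn further for the same change,
// which gives finer control over a small knob.
void applyTwist(Pot& pot, double twistDegrees, double precision) {
    assert(precision > 0.0 && pot.sweepDegrees > 0.0);
    const double delta = twistDegrees / (pot.sweepDegrees * precision);
    // Keep the pot within its end stops.
    pot.value = std::min(1.0, std::max(0.0, pot.value + delta));
}
```

With these assumed numbers, a 27-degree twist at precision 1 moves the pot by a tenth of its travel, and the same twist at precision 2 moves it by half as much.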

Research

While on holiday, I came across an article in an old Tape Op magazine by the audio engineer and author John La Grou, who in 2014 predicted that a similar VR application would be used in a studio environment.

“The future of audio engineering”

John La Grou – Tape Op magazine

The following is an excerpt from his conclusion:

By 2050, post houses with giant mixing consoles, racks of outboard hardware and patch panels, video editing suites, box-bound audio monitors, touch screens, hardware input devices, and large acoustic control rooms will become historical curiosities. We will have long ago abandoned the mouse. DAW video screens will be largely obsolete. Save for a quiet cubicle and a comfortable chair, the large, hardware-cluttered “production studio” will be mostly a quaint memory. Realspace physicality (e.g., pro audio gear) will be replaced with increasingly sophisticated head-worn virtuality. Trend charts suggest that by 2050, head-worn audio and visual 3D realism will be virtually indistinguishable from real space.

Microphones, cameras, and other frontend capture devices will become 360-degree spatial devices. Postproduction will routinely mix, edit, sweeten, and master in head-worn immersion.

……. (removed content)

Future recording studios will give us our familiar working tools: mixing consoles, outboard equipment, patch bays, DAW data screens, and boxy audio monitors. The difference is that all of this “equipment” will exist in a virtual space. When we don our VR headgear, everything required for audio or visual production is there “in front of us” with lifelike realism. In the virtual studio, every functional piece of audio gear every knob, fader, switch, screen, waveform, plugin, meter, and patch point will be visible and gesture controllable entirely in an immersive space.

Music postproduction will no longer be subject to variable room acoustics. A recording’s spatial and timbral qualities will remain consistent in any studio in the world because the “studio” will be sitting on one’s head. Forget the classic mix room monitor array with big soffits, bookshelves, and Auratones. Headworn A/V allows the audio engineer to emulate and audition virtually any monitor environment, including any known real space or legacy playback system, from any position in any physical room.

You can find the entire article in the Tape Op magazine archives or at:

https://www.stereophile.com/content/audio-engineering-next-40-years

Development

So far the project has been self-funded. And while this was a fun work in progress, it didn’t create any income, which limited the amount of development time I could spend on it. To be able to dedicate more of my time and resources to the development, the project needs funding. Although I found quite a few interested people, none of them were able to supply funding for this project at that time.

You can see on my portfolio page what the application could do at that time.

https://www.anymotion.nl/portfolio/vr-sound-engineer-retro-recordings/

Almost all development came to a halt at the end of 2018, as I needed to earn more income from paid jobs in video and photography and had less time to work on this project.

In April 2020, when my normal work (photography, video editing, filming) came to a complete standstill due to the coronavirus, I had just learned about the new XR modules and the ECS (Entity Component System) for audio and data processing in the 2018 and 2020 versions of Unity3D. This gave me newfound energy to continue working on the project.

Current Status

Currently, I am in the process of rebuilding the entire Virtual Reality Recording Studio application and 3D models using the latest versions of Unity and Blender 3D. This is to ensure compatibility with all XR/VR headsets available on the market and offer a uniform interface across different devices. In addition, I am porting all the audio processing from a mix of C++ and C# to the new C# audio ECS in Unity3D. This not only expands the possibilities but also makes programming and managing the code a lot easier and more efficient.

In the next blog post, I’ll go further into possible use cases for this application and how other developers can help with development.

#audiocomponentsystem #mixingconsole #development #ecs #audioengineer #mixing #netherlands #neve #Oculus #nevevrlegend #recording #soundengineer #soundcity #recordingstudio #unity3d #Virtualreality #Vive #xr #application #retrorecording